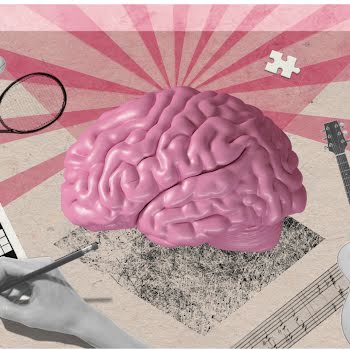

Does facial recognition technology mark the end of privacy as we know it?

By Amanda Cassidy

24th Jan 2020

Imagine being tracked everywhere you walked over the past month without your permission or knowledge. It is a scary idea, and one that hit the headlines this week after the London Metropolitan Police announced the rollout of live facial recognition technology. Amanda Cassidy reports.

MacKenzie Fegan boarded her flight from New York to Mexico without ever showing her passport. She was simply told to smile into a camera. The 34-year-old was astonished and took to social media to tweet the airline (JetBlue) asking when she had ever given permission for her image to be used in this way. The tweet went viral. “Did facial recognition replace boarding passes, unbeknown to me? Did I consent to this?” she wrote to the airline.

We know that technology is advancing at a rapid pace. Computers have grown much better at recognising faces in recent years, unlocking a myriad of applications for security, from tracking criminals to counting truants. But as cameras appear in some of the most unlikely places across the globe, some have raised fears about how much of our privacy we stand to lose. Perhaps it is great for keeping terrorists at bay, but do you really want to be tracked coming out of your hospital appointment?

Living with facial recognition technology just got a step closer after the London Metropolitan Police Service announced it was rolling out identity-confirming cameras in specific locations across the UK capital. Pilot operations had previously been conducted in London and South Wales. In a statement, the Met Police said it was using the technology “to help tackle serious crime including serious violence, gun and knife crime, child sexual exploitation and help protect the vulnerable”.

But at what cost?

Does the end justify the means?

Sarah St Vincent is a researcher at the National Security and Human Rights Watch group. She says accuracy problems are cropping up that could spell disaster. “There is a tendency among governments, even when they have a legitimate goal, to view new technologies as some kind of magic. We need to make sure that all of these tools are the least intrusive and effective methods possible.”

JetBlue responded to MacKenzie’s tweet, explaining that passengers were not required to board biometrically and were informed of this through gate announcements. The airline also pointed out that it does not have access to customer photos and that the photos are deleted within two weeks.

Of course, this type of technology is widely used outside of security too. Companies are training algorithms to recognise not only identity but also emotions, with far-reaching implications. Researchers at Stanford University even created software that could tell whether someone was heterosexual or gay, using dating websites to verify its decisions.

And then there is the business of your face — private companies looking to maximise information mining that includes tracking the movement of communities and observing behaviours.

But the Met Police, in this case, have been quick to point out that the technology they will use will not replace traditional policing. “This is a system which simply gives police officers a prompt suggesting that person over there may be the person you are looking for. It is always the decision of an officer whether or not to engage with someone.”

The genie cannot be put back in the box when it comes to sweeping surveillance.

However, the amount and type of information collected by private companies and public bodies should have limits. Facial recognition technology can help to protect people, but it is early days when it comes to accountability and ethics.

Image via Unsplash.com